Feature stores are the new cool kids in the neighbourhood of Data engineering and AI (artificial intelligence). Hyperscale AI companies (such as Uber, Netflix) have built their own feature stores to solve the problems of reusing, governing and securing access to features (data for AI) in a shared platform. Hopsworks is a modular open-source platform for managing data for AI (a standalone Feature Store), computing features (Spark, Python), and training models. In this post, we introduce the project-based multi-tenancy security model in Hopsworks for users, data, and programs. We describe how our project-based multi-tenant security model is, in effect, a form of dynamic role-based access control with zero performance overhead.

Hopsworks.ai is a SaaS version of Hopsworks, currently available on AWS. Hopsworks clusters can be run with an IAM profile, providing them with an identity in AWS with permission policies that capture what operations the Hopsworks cluster is authorized to perform in AWS - such as read/write data in S3 buckets. Hopsworks can also be used from Data Science and Feature Engineering platforms (Databricks, Sagemaker, KubeFlow, EMR) using API keys exported from Hopsworks.

This post is concerned primarily with the internal security model in Hopsworks that enables you to host sensitive data in a shared cluster, providing powerful access control and self-service capabilities. The benefit of Hopsworks’ project-based multi-tenancy model is that you can host many users and feature stores (and other projects) on a single cluster, with self-service access to different feature stores. In particular, you can host production, staging, and development feature stores in a single cluster - you do not need to manage and pay for separate clusters.

Role-based access control (RBAC) is a well-known security model that enables administrators to give a group of users the same access rights to selected resources. With roles, an administrator at a company could define a single security policy and apply it to all members of a department. But individuals may be members of multiple departments, so a user might be given multiple roles. Dynamic role-based access control means that, based on some other policy, you can change the set of roles a user can hold at a given time. For example, if a user has two different roles - one for accessing banking data and another for accessing trading data - then with dynamic RBAC you could restrict her to holding only one of those roles at a given time. The policy for deciding which role the user holds could, for example, depend on what VPN (virtual private network) the user is logged in to or what building the user is present in. In effect, dynamic roles would allow her to hold only one of the roles at a time and would sandbox her inside one of the domains - banking or trading. This would prevent her from cross-linking or copying data between the trading and banking domains.
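The banking/trading example can be sketched in a few lines of Python. This is a minimal illustration of the dynamic RBAC idea, not Hopsworks code: the `DynamicRoleSession` class and its method names are hypothetical, and the policy that selects the active role (VPN, building) is reduced to an explicit `activate` call.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DynamicRoleSession:
    """Hypothetical model of a user who holds several roles but only one at a time."""

    user: str
    assigned_roles: frozenset
    active_role: Optional[str] = None

    def activate(self, role: str) -> None:
        # Only roles that were assigned to the user can be activated.
        if role not in self.assigned_roles:
            raise PermissionError(f"{self.user} was never assigned role {role!r}")
        # Activating a role replaces the previous one, so at most one is active.
        self.active_role = role

    def can_access(self, domain: str) -> bool:
        # Decisions use the active role only, never the full set of assigned roles.
        return self.active_role == domain
```

Because `can_access` looks only at `active_role`, a user who holds both roles still cannot touch banking and trading data within the same session.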

Hopsworks implements a dynamic role-based access control model through a project-based multi-tenant security model. Inspired by GDPR, in Hopsworks a Project is a sandboxed set of users, data, and programs (where data can be shared in a controlled manner between projects). Every Project has an owner with full read-write privileges and zero or more members.

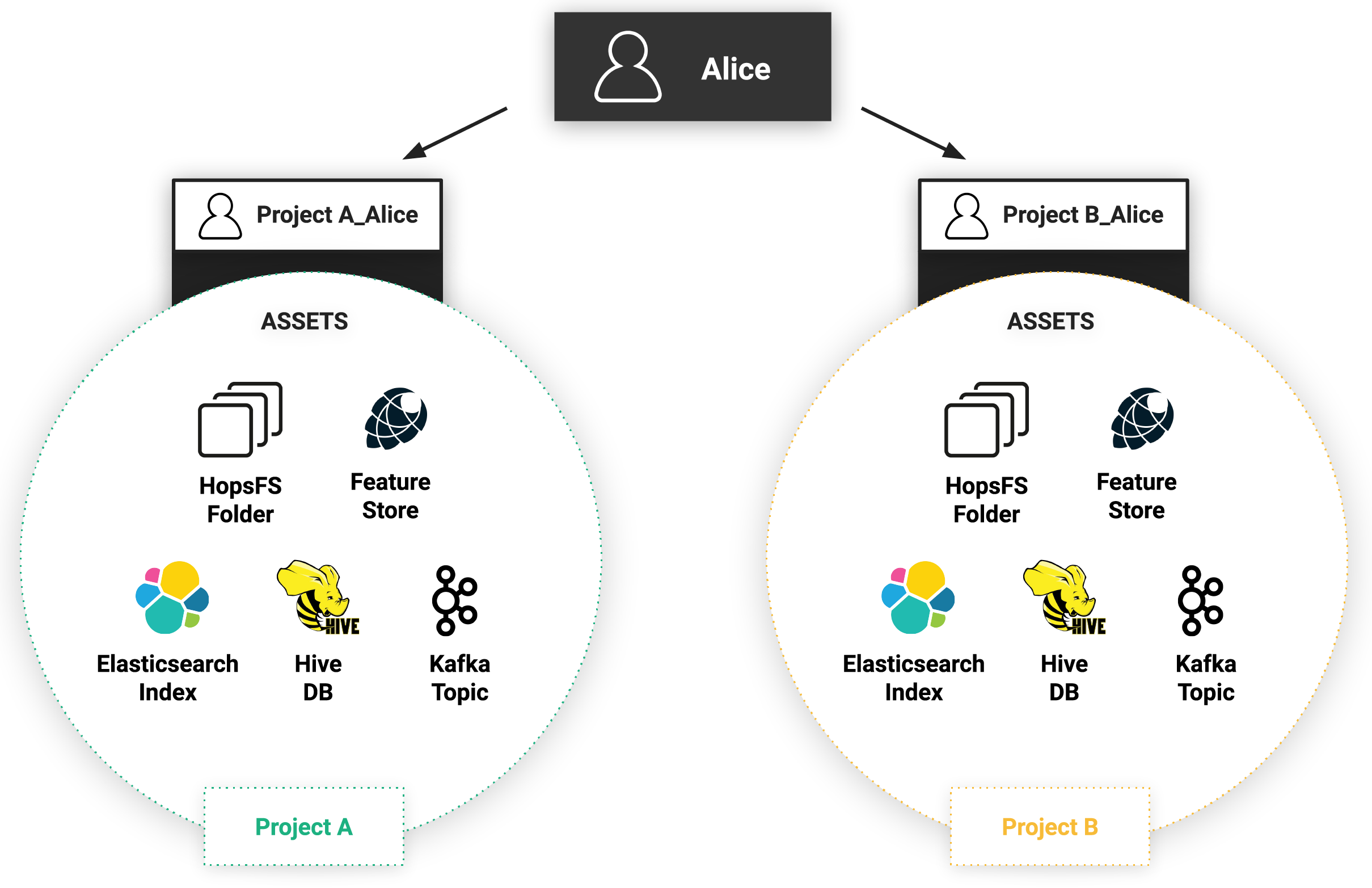

A project owner may invite other users to their project as either a Data Scientist (read-only and run-jobs privileges) or a Data Owner (full privileges). Users can be members of (or own) multiple Projects, but inside each project, each member (user) has a unique identity - we call it a project-user identity. For example, user Alice in Project A is different from user Alice in Project B (in fact, the system-wide project-user identities are ProjectA__Alice and ProjectB__Alice, respectively). As such, each project-user identity is effectively a role with the project-level privileges to access data and run programs inside that project. If a user is a member of multiple projects, she has, in effect, multiple possible roles, but only one role can be active at a time when performing an action inside Hopsworks. When a user performs an action (for example, runs a program), it is executed with the project-user identity. That is, the action only has the privileges associated with that project. The figure below illustrates how Alice has a different identity for each of the two projects (A and B) that she is a member of. Each project contains its own separate private assets. Alice can use only one identity at a time, which guarantees that she can’t access assets from both projects at the same time.
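To make the project-user idea concrete, here is a small sketch in Python. The `PROJECT__USER` naming and the two project roles come from the description above; the `ProjectMemberships` class, the action names (`read`, `write`, `run_jobs`), and the reduction of "full privileges" to those three actions are assumptions made for illustration and do not reflect Hopsworks internals.

```python
# Privileges per project role, simplified from the description above.
ROLE_PRIVILEGES = {
    "Data Owner": {"read", "write", "run_jobs"},
    "Data Scientist": {"read", "run_jobs"},
}


def project_user(project: str, username: str) -> str:
    """Build the system-wide project-user identity, e.g. ProjectA__Alice."""
    return f"{project}__{username}"


class ProjectMemberships:
    """Hypothetical in-memory registry mapping project-user identities to roles."""

    def __init__(self) -> None:
        self._role_of = {}

    def add_member(self, project: str, username: str, role: str) -> str:
        if role not in ROLE_PRIVILEGES:
            raise ValueError(f"unknown role: {role}")
        identity = project_user(project, username)
        self._role_of[identity] = role
        return identity

    def is_allowed(self, project: str, username: str, action: str) -> bool:
        # The action is evaluated for exactly one project-user identity.
        role = self._role_of.get(project_user(project, username))
        return role is not None and action in ROLE_PRIVILEGES[role]
```

Since every check is keyed on the combined identity, Alice's privileges in one project never leak into another project she belongs to.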

An important aspect of project-based multi-tenancy is that assets can be shared between projects - sharing does not mean that data is duplicated. The current assets that can be shared between projects are: files/directories in HopsFS, Hive databases, feature stores, and Kafka topics. For example, in the figure below there are three users (User1, User2, and User3) and two projects (A and B). User1 is a member of project A, while User2 and User3 are members of project B. All three users (User1, User2, User3) can access the assets shared between project A and project B. As sharing does not mean copying, the access control rules for the asset are updated to give users in the other project read or write permissions on the shared asset.
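Sharing by updating access control rules, rather than copying data, can be sketched as follows. The `Asset` class and its per-project ACL dictionary are hypothetical and only illustrate the idea; the real rules are enforced by the underlying services.

```python
class Asset:
    """Hypothetical asset (dataset, feature store, topic) with a per-project ACL."""

    def __init__(self, name: str, owner_project: str) -> None:
        self.name = name
        # The owning project has read and write access from the start.
        self.acl = {owner_project: {"read", "write"}}

    def share_with(self, project: str, writable: bool = False) -> None:
        # Sharing only adds an ACL entry; the underlying data is not copied.
        self.acl[project] = {"read", "write"} if writable else {"read"}

    def allows(self, project: str, permission: str) -> bool:
        return permission in self.acl.get(project, set())
```

There is still a single `Asset` object after sharing, so a write made by one project is immediately visible to the other.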

As we will see later on, project-user identity is based on an X.509 certificate issued internally by Hopsworks. Access control policies, however, are implemented by the platform services.

When a user authenticates with Hopsworks, they are logged into the platform with a Hopsworks user identity. This user identity is needed to be able to construct the project-user identity - it is the “user” part of the project-user identity. In Hopsworks, a user-identity is mapped to a global Hopsworks role (independent of project membership) - a normal user or an administrator. A normal user can search for assets, update her profile, generate API keys, and change to/from projects. Administrators have access to user, project, storage, and application management pages, system monitoring and maintenance services. They can activate or block users, delete Projects, manage Project quotas, promote normal users to administrators, and so on. It’s important to mention here that a Hopsworks administrator cannot view the data inside a project - even if they are allowed to delete a project.

A user interacts with Hopsworks through the web application and they don’t necessarily realize that the web application is a facade to a modular distributed system. In the background we run our POSIX-compliant distributed filesystem, our cluster management and scheduler, a distributed in-memory database RonDB, OpenSearch and others.

A fundamental principle in every distributed system is that processes exchange messages over the network or through shared state (such as a filesystem or database). When communication is performed by message passing, it is imperative that we protect the messages from adversaries reading or modifying their content and validate the identity of the caller. Traditionally, Hadoop uses Kerberos and GSSAPI to authenticate and authorize users and to encrypt data in transit. While Kerberos (Active Directory) is widely adopted by big organizations, the administration of users and services is a painful process and the system does not scale. On top of that, the Kerberos APIs are so complicated that programming against them can be really challenging.

To avoid the pain of Kerberos (and be able to natively integrate with platforms like Kubernetes), we completely re-designed the security model of our core systems to use X.509 certificates. We replaced Kerberos with Public Key Infrastructure (PKI) with X.509 certificates to authenticate and authorize users. Certificates enabled us to also use the well established TLS protocol to provide confidentiality and data integrity. Every user and every service in a Hopsworks cluster has a private key and an X.509 certificate.

Hopsworks supports a number of stateful and compute services that use X.509 certificates to authenticate users, applications, and services: HopsFS, HiveServer2, Kafka, YARN. These services all provide their own authorization schemes. We unified HopsFS and Hive’s authorization models by providing 2-way TLS in HiveServer2 and storage based authorization for the Hive metastore, to delegate access control decisions for Hive to HopsFS. In Hive, tables and databases store their data files inside directories on HopsFS, so HopsFS ACLs (access control lists) authorize file system operations by Hive (read from tables, write to tables). The easy-to-understand ACLs that we expose in Hopsworks (for Hive, and datasets in HopsFS) are captured in two roles: Data Scientists can read, Data Owners can read/write. HopsFS ACLs can be customized directly in Hopsworks from version 1.4.

HopsYARN uses X.509 certificates to identify users. HopsYARN also creates and manages (including renewal) application certificates for YARN applications (such as Spark jobs).

Hopsworks projects also support two other multi-tenant services that are not currently backed by X.509 certificates: OpenSearch and the Online Feature Store (RonDB, MySQL Cluster).

Hopsworks uses JSON Web Tokens (JWT) for authentication/authorization in OpenSearch. For every Hopsworks project, a number of private indexes are created in OpenSearch: an index for real-time logs of applications in that project (accessible via Kibana), an index for ML experiments in the project, and an index for provenance for the project’s applications and file operations. Currently, we do not support sharing indexes across projects - they are private to the project. MySQL Cluster is the online feature store in Hopsworks, and details on how we provide multi-tenant access to the online feature store using user credentials are provided later in this post.

As we saw in the previous section, most multi-tenant services in Hopsworks are built on X.509 certificates. Hopsworks comes with its own root Certificate Authority (CA) for signing certificates internally. Hopsworks root CA does not directly sign requests, instead it uses an intermediate CA to do so. To protect against a security breach, more than one intermediate CA can be made responsible for a specific domain. As shown in the above figure, there is one intermediate CA for creating API and Kubelet certificates that can be used to access an external Kubernetes cluster (more details on integration with Kubernetes are provided later in this post) and another Hopsworks intermediate CA for user, application, and service certificates inside Hopsworks.

Hopsworks intermediate CA supports three kinds of certificates: User, Application and Service certificates. They are all signed by the same Hopsworks intermediate CA but have different lifespan and attributes. In the following sections we are going to dig deeper on how they are issued, their lifecycle and how they’re used.

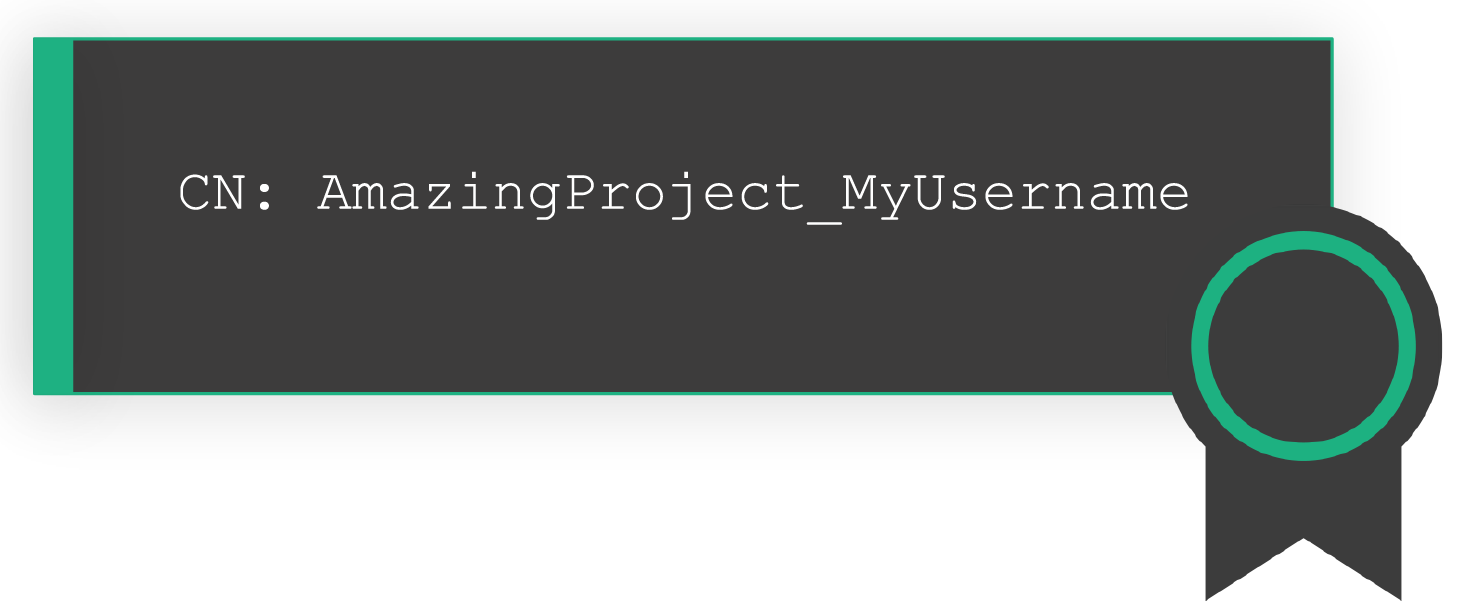

When a user in Hopsworks becomes a member of a project (either when they create their own project or are added as a member to a project), a new user is created behind the scenes. This new user uniquely identifies a user belonging to a specific Project, a project-user ID. The username of this project-user is in the form of PROJECTNAME__USERNAME.

For each project-user, we automatically generate an X.509 certificate and a private key. The certificate carries the project-user’s username in the Common Name field of the X.509 Subject, which is used to authenticate the user.

Certificates and private keys are stored in the database and pulled when needed to perform RPCs or REST calls (a caching mechanism is in place to avoid round-trips to the database). All private keys are stored encrypted in the database. User certificates are used for all operations that are not executed within the context of an application but still access the data managed by Hopsworks, for example, when a user previews features of an Offline Feature Store or submits an application to the scheduler. These certificates can also be securely extracted from Hopsworks and used by our hsfs client library for authentication in conjunction with API keys.

A user will launch a job which will read/write data from/to HopsFS, negotiate for more resources in the cluster, write to the feature store, or produce to a Kafka topic. To support fine-grained access control, lineage, and provenance, we issue a new set of cryptographic material for every application. The application certificate contains the username of the user who submitted the job and a unique identifier for the current application. With this information we are now able to log information about who created or modified a specific file and with which application, or in machine learning, we can infer which application read/wrote this feature data.

Application certificates are transparent to the user. HopsYARN is responsible for their lifecycle. When a new application is submitted to the cluster, a new X.509 certificate is generated and signed by Hopsworks intermediate CA. The cryptographic material is shipped automatically to all containers and revoked once the application has finished. To minimize the attack vector, HopsYARN will periodically rotate them. It will generate a new pair, ship them to already running containers, and revoke the previous set.
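The lifecycle described above (issue on submission, rotate while running, revoke on completion) can be sketched as a small state machine. This is an illustrative model only: `AppCertificateManager`, its method names, and the use of integer serial numbers are assumptions, and no real key material is generated.

```python
import itertools


class AppCertificateManager:
    """Hypothetical model of the application-certificate lifecycle."""

    def __init__(self) -> None:
        self._next_serial = itertools.count(1)
        self.active = {}      # application id -> serial of the current certificate
        self.revoked = set()  # serials that must no longer be accepted

    def issue(self, app_id: str) -> int:
        # A fresh certificate is created when the application is submitted.
        serial = next(self._next_serial)
        self.active[app_id] = serial
        return serial

    def rotate(self, app_id: str) -> int:
        # The new material becomes current first, then the previous set is revoked.
        old_serial = self.active[app_id]
        new_serial = next(self._next_serial)
        self.active[app_id] = new_serial
        self.revoked.add(old_serial)
        return new_serial

    def finish(self, app_id: str) -> None:
        # Once the application has finished, its certificate is revoked.
        self.revoked.add(self.active.pop(app_id))
```

Replacing the certificate before revoking the old one means a running container always holds at least one valid credential during rotation.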

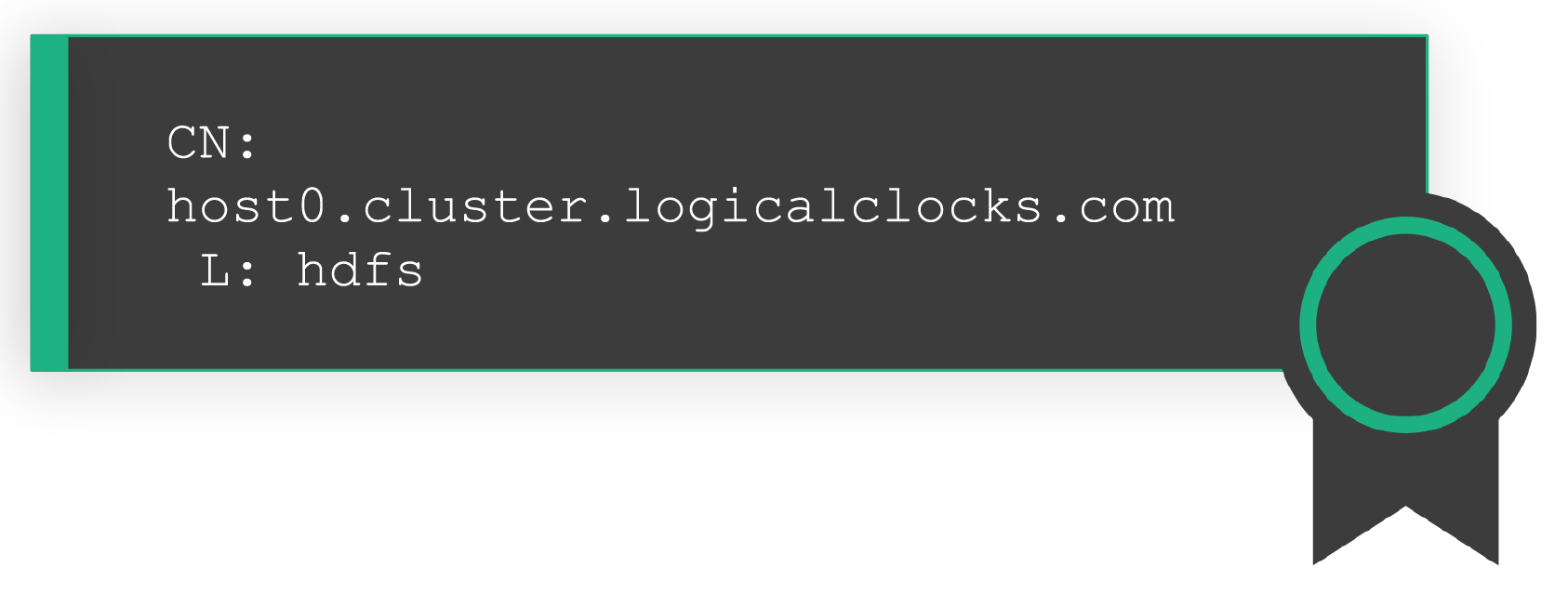

Finally, the last type of Hopsworks certificate is the one used by the services themselves. Services communicate with each other using their own certificates to authenticate and encrypt all traffic. Each service in Hopsworks that supports TLS encryption and/or authentication has its own service-specific certificate. Service certificates contain the Fully Qualified Domain Name (FQDN) of the host they are installed on, the service discovery domain names they are issued for, and the login name of the system user who’s supposed to run the process. They are generated when a user provisions Hopsworks - either with a fresh on-prem installation or by spinning up a cluster on managed.hopsworks.ai - and in general they have a long lifespan. Service certificates can be rotated automatically at configurable intervals or upon request of the administrator. The services in Hopsworks that have their own X.509 certificates for encrypting their network traffic:

The services in Hopsworks that support two-way X.509 certificates for both encrypting their network traffic and client-side authentication:

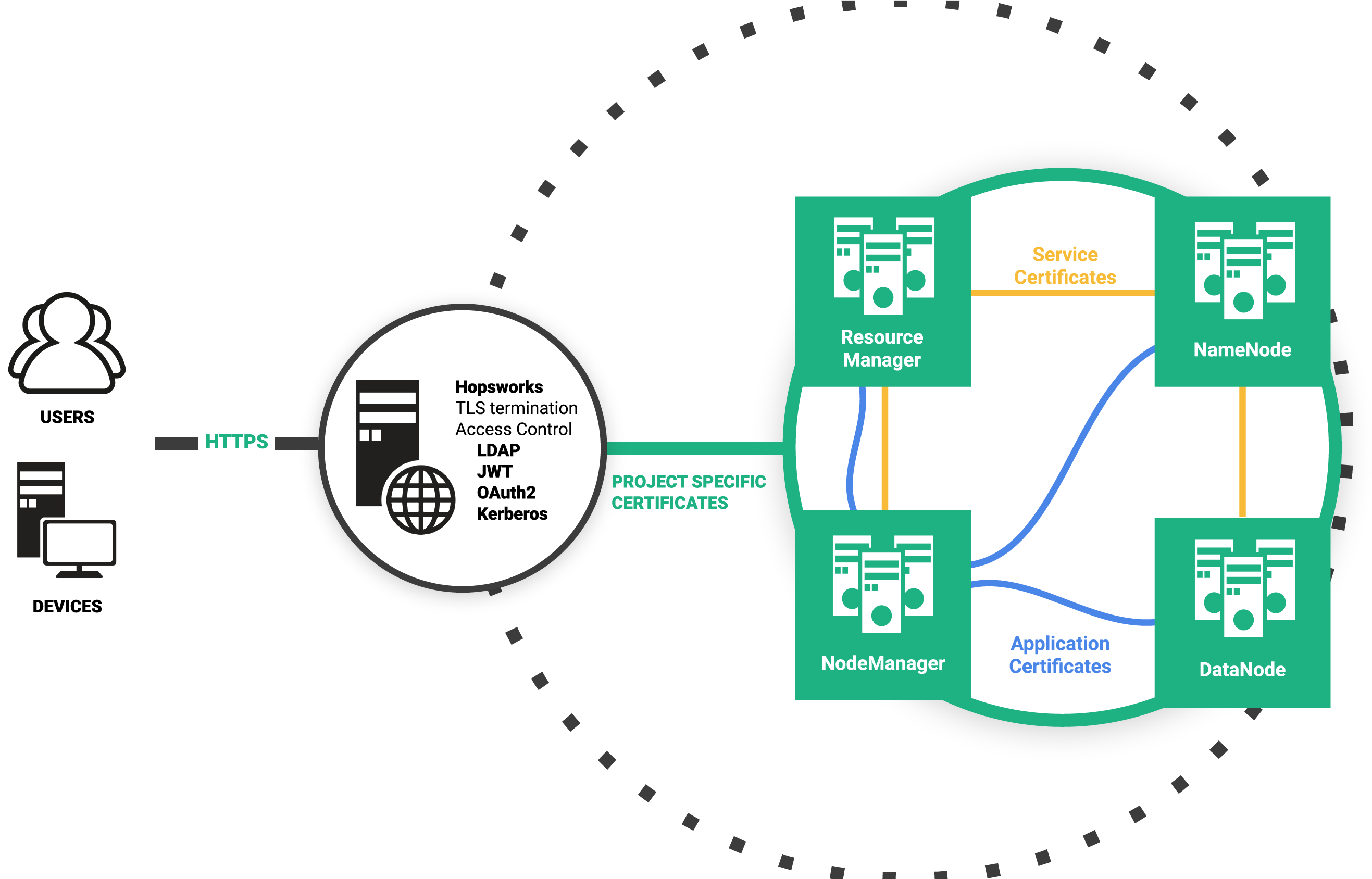

So, now that we’ve outlined the building blocks for our security model, we will see how we actually protect all data in-transit. All backend services exchange messages with each other. Both servers and clients require all communication to be done over TLS with 2-way authentication, that is, both entities must present a certificate.

The server will check if the client’s certificate is still valid and trusted. It will also validate the peer’s certificate against a Certificate Revocation List and if the certificate has been revoked, it will drop the connection. As a last step of protection for project-user and application certificates, the username that is encoded in the message - which is the effective user performing an action - is validated against the Common Name field of the X.509 certificate. That way a rogue user cannot impersonate other users. In server-to-server communication, service certificates are used. Remember the FQDN in the certificate? The receiver will do a DNS lookup (result is cached) and the answer should match the incoming IP address, so an adversary can’t join a faulty node in the cluster. Finally, the Locality (L) field of a Service X.509 certificate should also match the username of an incoming RPC.
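The checks applied to project-user and application certificates can be summarised in a short sketch. It works on an already-parsed certificate and skips the TLS handshake entirely; `PeerCertificate`, `accept_rpc`, and the `chain_trusted` flag (a stand-in for real chain validation against the CA) are hypothetical names, and the service-certificate checks (FQDN lookup, Locality field) are left out.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class PeerCertificate:
    """Hypothetical, already-parsed view of a client certificate."""

    serial: int
    common_name: str
    not_before: datetime
    not_after: datetime
    chain_trusted: bool  # stands in for real chain validation against the CA


def accept_rpc(cert: PeerCertificate, effective_user: str,
               revoked_serials: set, now: datetime) -> bool:
    """Return True only if every check described above passes."""
    # 1. The certificate must be trusted and inside its validity period.
    if not cert.chain_trusted or not (cert.not_before <= now <= cert.not_after):
        return False
    # 2. A revoked certificate causes the connection to be dropped.
    if cert.serial in revoked_serials:
        return False
    # 3. The effective user in the message must match the Common Name.
    return cert.common_name == effective_user
```

The third check is what stops a user with a valid certificate from claiming to be someone else in the message body.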

Hopsworks Certificate Authority keeps a list of revoked certificates. Each time a project is deleted, or a user is removed from a project or a job finishes or when a certificate is rotated, Hopsworks updates its CRL and digitally signs it. All backend services periodically fetch the CRL and refresh their internal data structures so that connections with revoked certificates will be dropped.
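A service-side view of this can be sketched as a small cache that re-fetches the revocation list once its copy is older than a configured interval. The `CrlCache` class and its `fetch_crl` callable are hypothetical; the sketch refreshes lazily on lookup rather than on a background timer and omits verification of the CRL signature.

```python
class CrlCache:
    """Hypothetical service-side cache of revoked certificate serial numbers."""

    def __init__(self, fetch_crl, refresh_interval_s: float) -> None:
        self._fetch_crl = fetch_crl  # callable returning an iterable of serials
        self._interval = refresh_interval_s
        self._revoked = frozenset()
        self._last_refresh = None

    def is_revoked(self, serial: int, now_s: float) -> bool:
        # Re-fetch the CRL when the cached copy is missing or too old.
        if self._last_refresh is None or now_s - self._last_refresh >= self._interval:
            self._revoked = frozenset(self._fetch_crl())
            self._last_refresh = now_s
        return serial in self._revoked
```

One consequence of periodic fetching is that a newly revoked certificate can still be accepted until the next refresh, so the refresh interval bounds how long a revoked credential remains usable.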

In Hopsworks.ai, the filesystem uses an object store as its storage engine. For example, in AWS, S3 is used, which lowers cost and also provides encryption-at-rest for files stored in buckets. For on-premises HopsFS, data can be stored encrypted-at-rest using ZFS native encryption support. Hopsworks can centrally manage encryption keys for ZFS pools (volumes), so that encryption keys are not stored on the storage hosts. As services in Hopsworks run in user-mode in Linux, the platform can be configured to ensure that administrators are not able to read file data.

Hopsworks and OpenSearch use JWT for authentication. Similar to application X.509 certificates, HopsYARN issues a token for each submitted job and propagates it to running containers. User code can then securely make REST calls to Hopsworks API or to OpenSearch indexes owned by the project. The JWT is rotated automatically before it expires and invalidated once the application has finished.

A general overview of the security architecture of Hopsworks with all the artifacts we’ve discussed so far is depicted in the figure below.

For every online feature store, a new database is created in RonDB to store the online features. The credentials for accessing that database are created by Hopsworks and securely stored in the database, encrypted by a master key. Clients of the online feature store, such as external online applications, use their API key to contact Hopsworks and retrieve their feature store credentials with which they can read data directly from RonDB using a JDBC/TLS connection.

While Hopsworks has its own security model, it needs to integrate with the security models of the environments in which it is used.

Hopsworks can run on all three major cloud providers: AWS, Azure, and GCP. Granting access to cloud resources with access keys is strongly discouraged, so Hopsworks integrates with each provider’s own Identity and Access Management system. More information is available at docs.hopsworks.ai.

IAM roles work great as long as every component resides in the same cloud provider. As soon as you want to integrate with a 3rd party service on another provider or on-premises, you will need to use access keys. Having access keys in the code is a bad practice, as sensitive information might end up in a version control system or leak outside the organization. Hopsworks provides a simple-to-use Secrets storage for securely accessing confidential information.

Hopsworks Enterprise supports single sign-on for Kerberos, LDAP, and OAuth2 identity providers. If you deploy Hopsworks in an environment where users use ActiveDirectory (Kerberos), LDAP, or OAuth2 for single sign-on, when they navigate to the Hopsworks UI, authentication plugins available in Enterprise Hopsworks (SPNEGO for Kerberos, and OpenID for OAuth2) will automatically log users in.

Hopsworks can be integrated with Kubernetes by configuring it to use one of the available authentication mechanisms: API tokens, credentials, certificates, and IAM roles for managed offerings such as AWS EKS. Hopsworks can offload to Kubernetes some of its microservices (Jupyter notebook instances, model serving) and run users’ jobs on Kubernetes. Project specific security material such as X.509 certificates and JWTs will be propagated to launched Pods so user code can access services in Hopsworks: HopsFS, Hive, OpenSearch, and the Hopsworks REST API.

The Hopsworks installer gives you the option to also install the open-source Kubernetes distribution alongside Hopsworks. This is convenient for environments with no existing Kubernetes installation or for testing and development. In this case, our KubernetesCA intermediate CA will issue all Kubernetes internal and etcd certificates.

Programs can also authenticate to the Hopsworks REST API using JWT or API keys. The former expire after a short period of time and the latter can be issued with limited scope, e.g., access only the Feature Store API. As such, API keys are typically used for external access to Hopsworks Feature Store by platforms such as Databricks, AWS Sagemaker, KubeFlow, and AWS EMR.
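The difference between the two credential types can be sketched as two checks: a JWT is rejected once it has expired, while an API key is rejected when the request falls outside its scope. The `ApiKey` and `Jwt` classes and the scope name `featurestore` are purely illustrative and do not mirror the Hopsworks REST API; signature verification is assumed to have happened already.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiKey:
    """Hypothetical API key record; scope names are illustrative only."""

    name: str
    scopes: frozenset


@dataclass(frozen=True)
class Jwt:
    """Hypothetical, already-verified JWT reduced to its expiry (epoch seconds)."""

    subject: str
    expires_at: float


def api_key_allows(key: ApiKey, required_scope: str) -> bool:
    # An API key is long-lived but only valid for the scopes it was issued with.
    return required_scope in key.scopes


def jwt_is_valid(token: Jwt, now_s: float) -> bool:
    # A JWT is short-lived: it is rejected as soon as it has expired.
    return now_s < token.expires_at
```

A narrowly scoped key limits what an external platform can do if the key leaks, which is why API keys suit integrations that only need the Feature Store.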

In this post, we gave an overview of how Hopsworks tackles information security and we introduced our novel project-based multi-tenant security model and the multi-tenant services supported in Hopsworks. Our security model is based primarily on X.509 certificates that come in three types: project-user certificates, application certificates, and service certificates. We also described how we address encryption in transit with TLS, as well as authentication to our web front-end and REST API with JWT and API keys, and integration with third-party services.

If you would like to learn more about compliance and security at Hopsworks you can read more about our ISO 27001 and SOC2 Type II certifications.